Wherever we look today, we are surrounded by AI in digital media and devices.

It now helps students finish their homework in a matter of minutes, helps engineers debug code, and even creates content (who knows, the next version of this blog could be generated by AI 😅).

But there is a catch. This really pushes us to be creative in our respective fields to stay relevant in the age of AI.

We wanted to see how energy intensive generative AI is, i.e. how much energy does ChatGPT, the chatbot built on OpenAI’s GPT (Generative Pre-trained Transformer) models, consume?

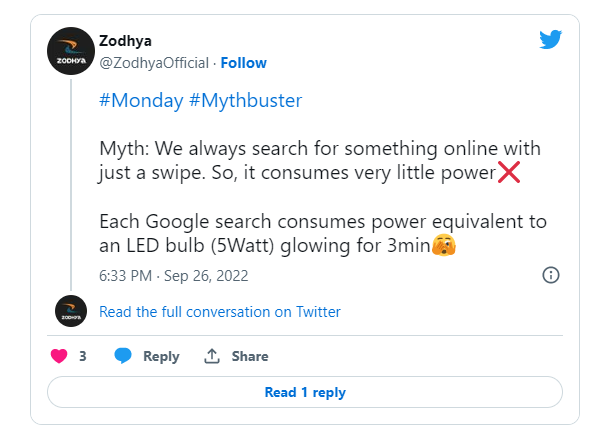

Everything we do online consumes some energy, from a Google search to the latest series we stream on OTT platforms. That is because our requests run on servers: stacks of hardware devices that communicate with each other, match our query with the right results on the web, and display them for us.

In fact, here’s the consumption of a Google search as mentioned in our blog earlier:

So how much does ChatGPT consume for a query then?

To start with, we asked ChatGPT itself. Easy, isn’t it?

But this is what it came back with: a long essay full of difficult words to wade through, and no clear answer.

Here’s a brief on how ChatGPT works

ChatGPT’s AI model has been trained on various types of content and data. The underlying GPT-3 model reportedly has 175 billion parameters!

ChatGPT also states that energy-efficient modelling hasn’t been fully implemented yet.

Such models are usually trained on GPUs like the Nvidia V100, which is rated at about 300 W as per its datasheet. For comparison, that is roughly the combined power rating of 5 ceiling fans.

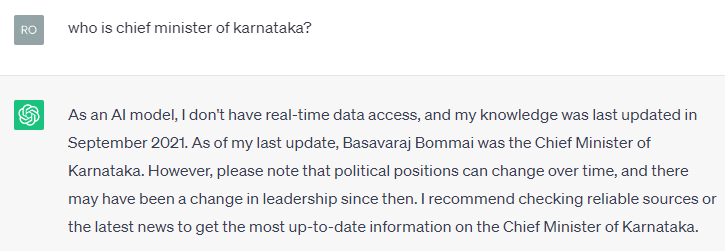

ChatGPT also says that it has been trained on data up to September 2021. So, if you ask a question about the latest stats, it may not be able to give you an answer. In fact, we tried asking one, and this is what it showed:

As per this research paper, it took 405 V100-years to train the GPT-3 model; in other words, a single V100 GPU would need 405 years.

So, the energy consumed to train the GPT-3 model is

300 W (V100 GPU power consumption) × 24 hrs per day × 365 days per year × 405 years = 1,064,340,000 Wh, or nearly 1064 MWh
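The multiplication is easy to reproduce. Here is a minimal Python sketch, assuming the 300 W rating and the 405 V100-years quoted above; the function name `training_energy_wh` is our own, used purely for illustration.

```python
HOURS_PER_YEAR = 24 * 365  # leap years ignored, as in the estimate above

def training_energy_wh(gpu_power_w, gpu_years):
    """Energy in Wh for GPUs drawing a constant power over a number of GPU-years."""
    return gpu_power_w * HOURS_PER_YEAR * gpu_years

gpt3_training_wh = training_energy_wh(gpu_power_w=300, gpu_years=405)
print(f"{gpt3_training_wh:,} Wh = {gpt3_training_wh / 1e6:,.2f} MWh")
# prints: 1,064,340,000 Wh = 1,064.34 MWh
```

Note that this treats every GPU as drawing its full rated power for the whole run, which is a simplification.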

It doesn’t stop at the energy used to train the model. There is also the energy used to run the model every time a question is asked on its platform.

Any platform needs servers to handle requests. As per the article by Semianalysis, OpenAI requires about 3617 HGX A100 servers, each consuming about 3000 W. The same article estimates that ChatGPT receives 195mn queries per day (15 responses × 13mn daily active users).

That means the energy consumed to serve requests on a given day = 3617 (HGX A100 servers) × 3000 W × 24 hrs = 260,424,000 Wh, or 260.42 MWh
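The same daily figure, along with the query count, can be checked with a short Python sketch. It assumes the server count, per-server power and usage numbers quoted from the article; the helper name `daily_serving_energy_wh` is ours.

```python
def daily_serving_energy_wh(servers, watts_per_server, hours_per_day=24):
    """Energy in Wh for a fleet of servers running at constant power for one day."""
    return servers * watts_per_server * hours_per_day

gpt3_daily_wh = daily_serving_energy_wh(servers=3617, watts_per_server=3000)
daily_queries = 15 * 13_000_000  # 15 responses x 13mn daily active users

print(f"Daily serving energy: {gpt3_daily_wh / 1e6:,.2f} MWh")
print(f"Daily queries: {daily_queries / 1e6:.0f}mn")
# prints:
# Daily serving energy: 260.42 MWh
# Daily queries: 195mn
```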

To serve these 195mn queries, the model was trained using 1064 MWh (one time) and runs on servers that consume 260.42 MWh per day.

So, for each query, the energy consumed = (1064 + 260.42) MWh / 195mn queries = 6.79 Wh ***

Or in simpler terms, each query on GPT-3 consumes about as much energy as running a 5 W LED bulb for roughly 1 hr 20 min!
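For anyone who wants to redo the per-query figure and the LED comparison, here is a small Python sketch. It assumes the training and daily serving estimates above, and (like the estimate itself) charges all of the training energy to a single day of queries.

```python
TRAINING_WH = 1_064_340_000     # one-time GPT-3 training estimate
DAILY_SERVING_WH = 260_424_000  # one day of serving
DAILY_QUERIES = 195_000_000     # 15 responses x 13mn daily active users
LED_BULB_W = 5

per_query_wh = (TRAINING_WH + DAILY_SERVING_WH) / DAILY_QUERIES
led_minutes = per_query_wh / LED_BULB_W * 60  # Wh / W = hours, then to minutes

print(f"{per_query_wh:.2f} Wh per query")
print(f"about {led_minutes:.0f} minutes of a {LED_BULB_W} W LED bulb")
```

This gives 6.79 Wh per query and a little over 80 minutes of LED time, in line with the rounded figure above.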

Think about what the consumption could be if it starts onboarding more users with this architecture.

So, the emphasis now should be on making such platforms energy efficient and on making their energy supply environment friendly, shouldn’t it?

Here’s something on GPT-4

As per this article, the GPT-4 model was trained on 25,000 Nvidia A100 GPUs for about 90-100 days, with each GPU rated at 300 W to 500 W. Since training architectures have advanced, let’s assume each GPU drew 300 W most of the time, over 90 days.

The energy consumed for training GPT-4 = 25,000 GPUs × 300 W × 90 days × 24 hrs per day = 16,200,000,000 Wh, or 16,200 MWh
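Since the article quotes ranges (90-100 days, 300 W to 500 W), it is worth seeing how much the answer moves. The Python sketch below is only arithmetic on those quoted ranges; the function name `cluster_training_energy_mwh` is ours, and the upper bound assumes every GPU ran at 500 W for the full 100 days.

```python
def cluster_training_energy_mwh(gpus, watts_per_gpu, days):
    """Energy in MWh for a GPU cluster drawing constant power for `days` days."""
    return gpus * watts_per_gpu * days * 24 / 1e6

low = cluster_training_energy_mwh(25_000, 300, 90)    # the assumption used in this blog
high = cluster_training_energy_mwh(25_000, 500, 100)  # upper end of the quoted ranges

print(f"GPT-4 training estimate: {low:,.0f} to {high:,.0f} MWh")
# prints: GPT-4 training estimate: 16,200 to 30,000 MWh
```

So our 16,200 MWh sits at the lower end of what these public numbers allow.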

As per DemandSage, GPT-4 processes over 1bn queries every day. And according to Towards Data Science, ChatGPT runs on 3200 Nvidia DGX A100 servers, each rated at 6.5 kW.

That means the energy consumed to serve requests on a given day = 3200 servers × 6500 W × 24 hrs = 499,200,000 Wh, or 499.2 MWh

To serve these 1bn queries, GPT-4 was trained using 16,200 MWh (one time) and runs on servers that consume 499.2 MWh per day, or about 0.5 Wh per query.

If the training energy is also charged to a single day of queries, the total energy per query = (16,200 + 499.2) MWh / 1000mn queries per day = 16.7 Wh ***
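Both GPT-4 per-query figures can be reproduced with a few lines of Python. This sketch assumes the server count, the 6.5 kW rating, the roughly 1bn daily queries and the 16,200 MWh training estimate used above.

```python
GPT4_TRAINING_WH = 16_200_000_000    # one-time training estimate
daily_serving_wh = 3200 * 6500 * 24  # 3200 DGX A100 servers at 6.5 kW for 24 hrs
daily_queries = 1_000_000_000        # roughly 1bn queries per day

serving_only = daily_serving_wh / daily_queries
with_training = (GPT4_TRAINING_WH + daily_serving_wh) / daily_queries

print(f"Serving only: {serving_only:.2f} Wh per query")
print(f"Training charged to one day: {with_training:.1f} Wh per query")
# prints:
# Serving only: 0.50 Wh per query
# Training charged to one day: 16.7 Wh per query
```

The serving-only value is 0.4992 Wh before rounding.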

Other considerations to be included, but subject to speculation, are:

- The Power Usage Effectiveness (PUE) of the data centre in which the hardware runs. PUE is the ratio of a data centre’s total energy use to the energy used by its IT equipment, and it typically varies between 1.18 and 2.0. Since we don’t know where ChatGPT was trained, we cannot include the consumption of the entire data centre and consider server consumption only.

- As per this article, the GPUs for GPT-4 ran at 32% to 36% utilisation, meaning not all of their capacity was used all the time, likely due to other processes like data pre-processing or synchronisation between GPUs.

- Sam Altman, CEO of OpenAI, notes in his blog that an average ChatGPT query consumes 0.34 Wh. That is plausible, considering the different services OpenAI offers via its platform and that the figure excludes the energy consumed for training.
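To get a feel for the first point, the Python sketch below scales our server-only daily estimates by the two ends of the PUE range mentioned above. This is a what-if only: we do not know the actual PUE of the data centres involved, and `facility_energy_mwh` is a name we made up for illustration.

```python
def facility_energy_mwh(it_energy_mwh, pue):
    """Total data-centre energy implied by a PUE (total energy / IT equipment energy)."""
    return it_energy_mwh * pue

# Server-only daily serving estimates from this blog, in MWh per day
estimates = [("GPT-3 serving", 260.42), ("GPT-4 serving", 499.2)]

for label, it_mwh in estimates:
    low = facility_energy_mwh(it_mwh, 1.18)  # efficient end of the range
    high = facility_energy_mwh(it_mwh, 2.0)  # inefficient end of the range
    print(f"{label}: {it_mwh} MWh/day at the servers, "
          f"{low:.1f} to {high:.1f} MWh/day for the whole facility")
```

At a PUE of 2.0, the facility uses twice what the servers alone do, so this one unknown can double the estimate.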

And as we write this, GPT-5 has been launched.

**We tried to arrive at these estimates with the best public resources available to us. We are open to any comments that lead to better estimates and, in turn, better knowledge for our readers.

***As one of our readers pointed out, it is unwise to divide the energy consumed for training ChatGPT (1064 MWh) over the queries of just 1 day, because the same trained model is reused over many days. As it is tough to keep count of ChatGPT queries over time, we suggest treating 1064 MWh as the one-time energy consumption for training and 260.42 MWh/day as the running energy consumption to serve queries over a single day (24 hours). These numbers will keep changing as ChatGPT comes out with new versions. This blog is only an attempt to give an idea of the energy consumption of such world-changing applications.
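To illustrate the reader’s point, here is a Python sketch that spreads the one-time training energy over every query served so far. It assumes, purely for illustration, that the daily query volume stays constant at 195mn; the function name `per_query_wh` is ours.

```python
def per_query_wh(training_wh, daily_serving_wh, daily_queries, days_in_service):
    """Per-query energy when training is spread over all queries served so far."""
    total_queries = daily_queries * days_in_service
    amortised_training = training_wh / total_queries
    serving = daily_serving_wh / daily_queries
    return amortised_training + serving

for days in (1, 30, 365):
    wh = per_query_wh(1_064_340_000, 260_424_000, 195_000_000, days)
    print(f"{days:>3} days in service: {wh:.2f} Wh per query")
# prints:
#   1 days in service: 6.79 Wh per query
#  30 days in service: 1.52 Wh per query
# 365 days in service: 1.35 Wh per query
```

The longer the model stays in service, the closer the per-query figure gets to the serving energy alone.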